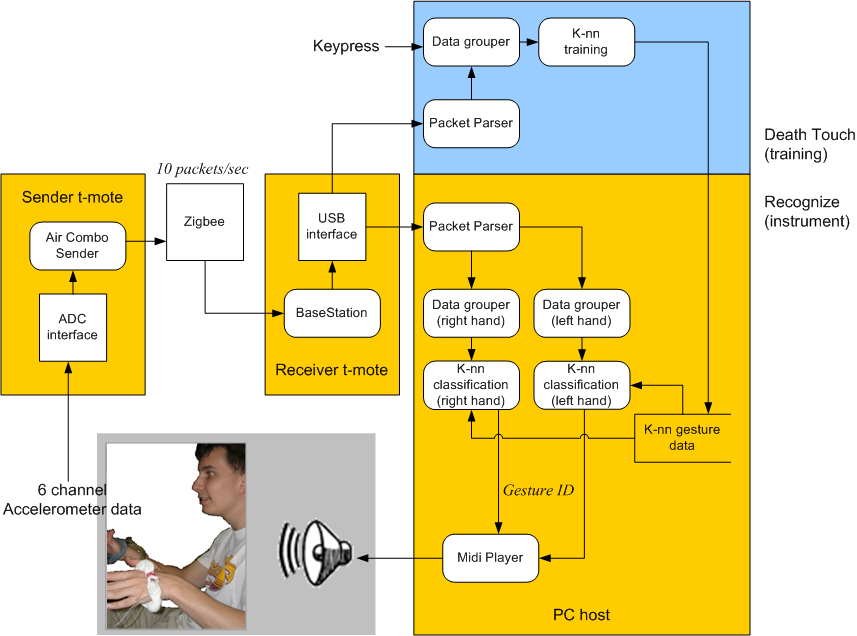

The sender Tmote polls the ADC for accelerometer data and sends it over ZigBee to the receiver Tmote.

The receiver Tmote forwards the data over USB to a program that logs it.

The recognition stage checks which Tmote each incoming packet came from, then runs k-nearest neighbors (KNN) for that Tmote. Our KNN implementation treats 8 sequential accelerometer readings as a single point in a high-dimensional space. During training, many such points are collected and assigned gesture labels. In actual use, incoming accelerometer readings are kept in a queue of length 8, and the queue's contents are compared against the training points to find the K nearest neighbors by Euclidean distance. If all K neighbors share the same gesture label, the point is assigned that gesture. If at least one of the K nearest neighbors has a different label or lies beyond a distance threshold, the point is assigned the non-gesture label.
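The matching rule described above can be sketched in Python as follows. This is a minimal illustration, not our actual implementation. It assumes each accelerometer reading is a single number and that all Tmotes share one training set. The names `classify`, `GestureRecognizer`, `NON_GESTURE`, `k`, and `max_dist` are hypothetical and chosen only for this example.

```python
import math
from collections import deque

WINDOW = 8          # readings per feature vector, as described in the text
NON_GESTURE = None  # hypothetical marker for "no gesture recognized"


def classify(window, training, k, max_dist):
    """Return the gesture label only if the k nearest training points
    all agree on it and all lie within max_dist; otherwise NON_GESTURE.

    training is a list of (vector, label) pairs with 8-element vectors.
    """
    point = tuple(window)
    # Euclidean distance from the query point to every training point.
    nearest = sorted(
        ((math.dist(point, tuple(vec)), label) for vec, label in training),
        key=lambda pair: pair[0],
    )[:k]
    if len(nearest) < k:
        return NON_GESTURE  # not enough training data to vote
    if any(d > max_dist for d, _ in nearest):
        return NON_GESTURE  # at least one neighbor is too far away
    labels = {label for _, label in nearest}
    # Unanimous vote required: a single dissenting neighbor rejects the gesture.
    return labels.pop() if len(labels) == 1 else NON_GESTURE


class GestureRecognizer:
    """Keeps one 8-long queue per Tmote and classifies it on every new reading."""

    def __init__(self, training, k=3, max_dist=50.0):
        self.training = training  # assumption: shared by all Tmotes
        self.k = k
        self.max_dist = max_dist
        self.queues = {}          # Tmote id -> deque of recent readings

    def feed(self, tmote_id, reading):
        queue = self.queues.setdefault(tmote_id, deque(maxlen=WINDOW))
        queue.append(reading)     # the oldest reading drops out automatically
        if len(queue) < WINDOW:
            return NON_GESTURE    # queue not yet full
        return classify(queue, self.training, self.k, self.max_dist)
```

Calling `feed(tmote_id, reading)` once per received packet returns either a gesture label or `NON_GESTURE`. Requiring a unanimous vote within a distance threshold makes the classifier conservative: ambiguous or unfamiliar motion is rejected rather than mapped to the closest gesture.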

Once a point has been assigned a gesture, a note is selected and sent to the MIDI Player, which produces sound for the user.