ARS System - Performance Analysis - Phase I

Tests Performed

1. Co-located fault free

WorkloadTest, WebLogic application server, God and Creditcard running

on the same machine; no other replicas; database server on a separate

machine on campus.

<<< Figure 1: graph co-located fault-free >>>

2. Distributed fault-free - communication baseline

Multiple machines:

- WorkloadTest on machine 1

- God and Creditcard on machine 2

- WebLogic application server on machine 3 (primary server)

- WebLogic application server on machine 4 (backup server)

- Database server on a separate machine on campus

A loop of 100 iterations was executed calling isAlive() on the server. This

call takes no arguments and doesn't do any processing or database

access. Therefore, the roundtrip time corresponds to the communication

time between client and server.
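The measurement loop described above can be sketched as follows. This is a minimal illustration, not the WorkloadTest code: the PingableServer interface and the RoundTripBenchmark class are hypothetical names, and the in-process stub in main() stands in for the remote server stub. Only isAlive() and the 100-iteration loop come from the test description.

```java
/** Hypothetical stand-in for the remote server interface; only isAlive() is named in the text. */
interface PingableServer {
    boolean isAlive() throws Exception;
}

final class RoundTripBenchmark {

    /** Calls isAlive() the given number of times and returns each roundtrip time in nanoseconds. */
    static long[] measure(PingableServer server, int iterations) {
        long[] samples = new long[iterations];
        for (int i = 0; i < iterations; i++) {
            long start = System.nanoTime();
            try {
                server.isAlive(); // no arguments, no processing, no database access
            } catch (Exception e) {
                throw new IllegalStateException("isAlive() failed on iteration " + i, e);
            }
            samples[i] = System.nanoTime() - start;
        }
        return samples;
    }

    /** Arithmetic mean of the samples (0.0 for an empty array). */
    static double mean(long[] samples) {
        if (samples.length == 0) {
            return 0.0;
        }
        double sum = 0;
        for (long s : samples) {
            sum += s;
        }
        return sum / samples.length;
    }

    public static void main(String[] args) {
        // Assumption: an in-process stub; a real run would obtain the remote server stub instead.
        PingableServer stub = () -> true;
        long[] samples = measure(stub, 100);
        System.out.printf("mean roundtrip: %.3f ms%n", mean(samples) / 1_000_000.0);
    }
}
```

Because the call does no work on the server, the samples collected against a remote stub would approximate pure communication time, which is what the baseline is meant to isolate.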

<<< Figure 2: graph >>>

3. Distributed fault-free

Multiple machines:

- WorkloadTest on machine 1

- God and Creditcard on machine 2

- WebLogic application server on machine 3 (primary server)

- WebLogic application server on machine 4 (backup server)

- Database server on a separate machine on campus

A loop of 100 iterations was executed. Each iteration would call three

business methods in sequence: getFlights(), makeReservation() and buyTickets().

In executions 52, 57, 81 and 83, the spikes are the result of a problem

in the database connection that caused a timeout and transaction

rollback on the server. Because the database is considered fault-free in

the context of this project, we'll not try to explain the spikes or

propose solutions or improvements to avoid them.

<<< Figure 3: graph >>>

4. Distributed with fault-injection

Multiple machines:

- WorkloadTest on machine 1

- God and Creditcard on machine 2

- WebLogic application server on machine 3 (primary server)

- WebLogic application server on machine 4 (backup server)

- Database server on a separate machine on campus

A loop of 100 iterations was executed. Each iteration would call three

business methods in sequence: getFlights(), makeReservation() and buyTickets().

After 20 executions, a fault was injected on the primary server. Then, 180 seconds later, a fault was injected on the other server. From then on, a fault was injected every 180 seconds on alternate servers.

<<< Figure 4: graph >>>

Findings and Conclusions

The results allowed us to conclude directly or indirectly:

- In the distributed scenario, response time is not as steady as in the co-located scenario. Several aspects of the infrastructure have non-deterministic response times and contribute to the variance observed in Figures 2 and 3:

- Time to send a message using Ethernet and 802.11b (wireless) is

non-deterministic (message loss can also occur).

- Time for the database server to respond to a request can vary

based on current load and existence of cached data from previous

commands.

- Time to execute a user request in a J2EE server may vary based

on the ability to reuse an EJB or database connection from the

respective pool. Also, the garbage collector can run and interfere with

response times.

- Response times for transactions should be defined as average

values; it's not feasible to define worst-case values because of the

impossibility of "real-time" determinism.

- Communication overhead is the most time-consuming portion of a

user "roundtrip" operation.

- The first request to an application server after restart takes

longer than the average, probably due to internal initialization

procedures still going on and the need to fill up pools of EJBs and

database connections.

- Garbage collection on the application server consumes from 3 to 7

milliseconds. Given the frequency of the GC operation and the cost of

the network overhead, this does not appear to be a significant factor in

the overall response time.

Next Improvements - Phases II and III

Performance of the system may be increased by taking the following

actions:

- Each EJB request in isolation requires 4 accesses to the

application server:

- JNDI lookup for the EJB, which returns the home object

(factory).

- A call to create() on the home object to create an instance of the EJB, which returns the EJB object.

- A call to the business method (e.g. makeReservation()) itself.

- A call to remove() to inform the server that this client won't need that EJB instance anymore.

Therefore, the total execution time of

a user operation can be reduced if we avoid some of the 4 calls

mentioned above. In the specific context of the ARS that is possible:

- Because we're using Stateless Session Beans (SLSBs), the call to remove() is not necessary. That conclusion follows from how an SLSB operates internally in the EJB container: an SLSB instance is drawn from a pool of instances for each request. That instance executes the call and returns the results to the user. Then, the container returns the instance to the pool; SLSBs are not passivated and do not retain state, so the call to remove() doesn't alter the availability of instances in the pool.

- The EJB object returned by the create() call can be cached

inside the client application. Then, when a subsequent business call is

made to the same EJB, steps 1 and 2 (the JNDI lookup and the create() call) are not necessary.

- In the CreditCard application, a connection to the database is

opened for every call. We can use a long-lived database connection and

avoid the time it takes to open a connection, which is very significant.

- In some cases, database access takes a significant amount of

time. We have identified some opportunities to optimize our SQL

statements, for example, by merging two select statements into one.

- The client-side class that handles communication with the server maintains a list of available servers, which is obtained by querying the naming service when the client is initialized. When a request sent to a server fails, that server is removed from the list. When the list becomes empty, the naming service is queried to obtain a refreshed list of available servers. Querying the naming service adds to the total response time of the transaction. To minimize this impact, we want to query the naming service when the size of the list goes from two elements down to one. The goal is to avoid the situation where the client has no server to talk to, which is the worst case in terms of impact on execution time.

- Load balancing: the current situation, without load balancing, is as follows: when we have primary server A and replica B, all client applications send requests to server A; server B only gets a request if server A fails. If we distribute the load across all available servers, the overall throughput of the system should increase and average response time should decrease. Therefore, we plan to implement and test load balancing by:

- Having the client call each available server in a round-robin

fashion. Thus, if the client has servers A, B and C on the list of available

servers, it will make the first call to A, the second to B, the third

to C, the fourth to A, and so on.

- Making the very first call of each client execution to a random

server among the servers available. The goal is to avoid the situation

where each client execution makes just one or two calls to the

server (this is an unlikely but possible operational profile); in that case, requests would be concentrated on server A (or, in general, on the first servers of the list).

- Creating a script that generates the synthetic workload

corresponding to 20+ simultaneous clients.
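The client-side list handling and the load-balancing ideas above (round-robin calls, a random first server, and refreshing the list when it drops to one element) could be combined roughly as follows. This is a sketch under assumptions, not the ARS client code: ServerSelector, nextServer() and reportFailure() are hypothetical names, servers are represented as plain strings, and the naming service query is modeled as a Supplier.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;
import java.util.function.Supplier;

/** Hypothetical client-side server list with round-robin selection and early refresh. */
final class ServerSelector {
    private final Supplier<List<String>> namingService; // stands in for the naming service query
    private final List<String> servers = new ArrayList<>();
    private int next; // index of the server to use for the next call

    ServerSelector(Supplier<List<String>> namingService, Random random) {
        this.namingService = namingService;
        refresh();
        // Random starting point, so short-lived clients do not all hit the first server.
        next = servers.isEmpty() ? 0 : random.nextInt(servers.size());
    }

    /** Returns the server for the next call, cycling through the list in round-robin order. */
    String nextServer() {
        if (servers.isEmpty()) {
            refresh(); // worst case: nothing left, so the query cost is paid on this call
        }
        if (servers.isEmpty()) {
            throw new IllegalStateException("no server available");
        }
        String chosen = servers.get(next % servers.size());
        next = (next + 1) % servers.size();
        return chosen;
    }

    /** Called by the client when a request to the given server fails. */
    void reportFailure(String server) {
        int index = servers.indexOf(server);
        if (index < 0) {
            return; // already removed
        }
        servers.remove(index);
        if (index < next) {
            next--; // keep the cursor on the same logical successor
        }
        // Early refresh: query when the list drops to one element (or fewer),
        // instead of waiting until it is empty.
        if (servers.size() <= 1) {
            refresh();
        }
    }

    private void refresh() {
        List<String> fresh = namingService.get();
        servers.clear();
        servers.addAll(fresh);
        if (next >= servers.size()) {
            next = 0;
        }
    }

    public static void main(String[] args) {
        ServerSelector selector =
                new ServerSelector(() -> List.of("A", "B", "C"), new Random());
        for (int i = 0; i < 6; i++) {
            System.out.println("call " + (i + 1) + " -> " + selector.nextServer());
        }
    }
}
```

The sketch does not address one open question: a refreshed list may still contain a server that has just failed if the naming service has not yet noticed the failure.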

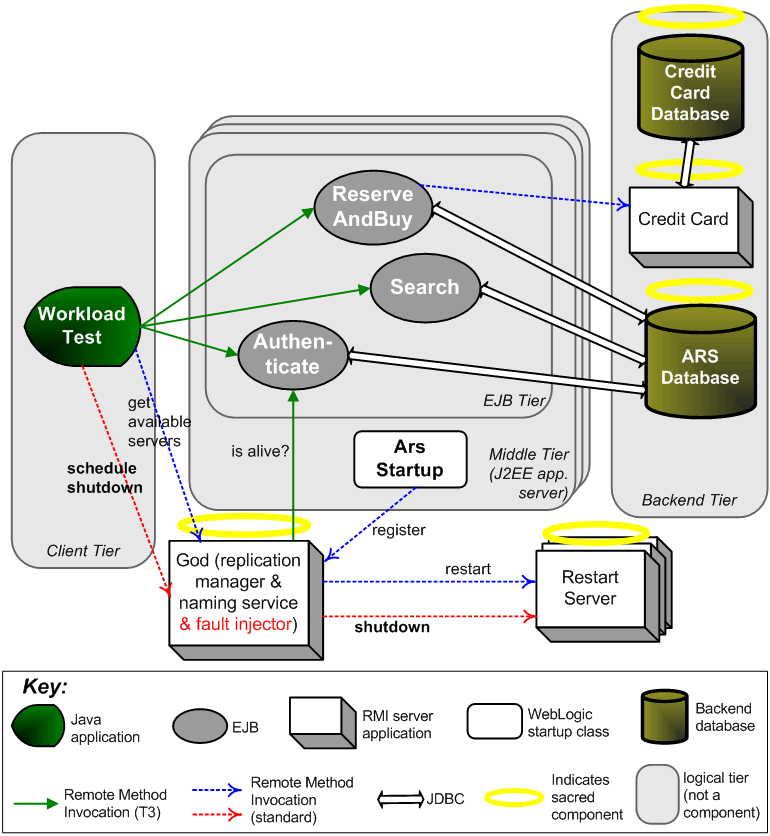

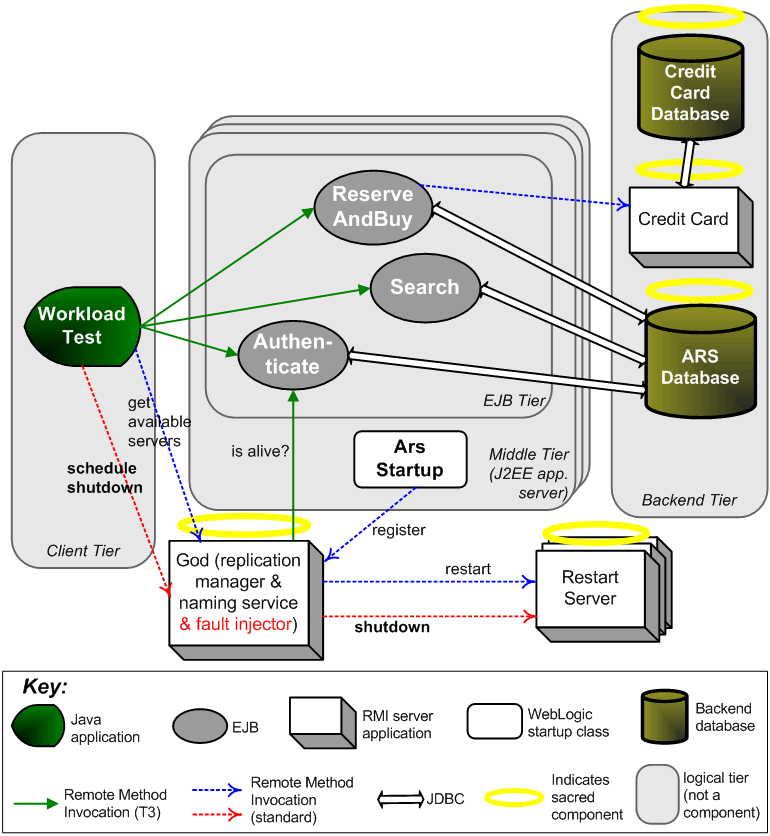

About the Fault Injector

The God application is the replication manager and naming service for the

ARS system. For performance analysis, the role of fault injector was

also given to God.

The following method was added to God's interface:

scheduleShutdown(long interval)

This method would spawn a thread for each registered application server

replica. The thread would periodically invoke stopServer() on RestartServer,

which is an RMI application that runs on each machine where a replica of

the application server is running. See the red connectors in the diagram

below.

RestartServer is responsible for restarting the application server in

case of failures, but in the fault injection context, it was used to

kill the application server instead. It would do that by calling a script (forceShutdown.cmd) that would interrupt the execution of the WebLogic application server on that machine.
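One way the scheduling described above might look is sketched below. Only scheduleShutdown() and stopServer() are named in the text; the RestartServer interface shown here is a local stand-in rather than the real RMI interface, and FaultInjector and cancel() are hypothetical. The sketch also assumes that the interval is given in milliseconds and that the per-replica threads are staggered so that faults alternate between replicas. That staggering matches the fault-injection test description but is not confirmed by it.

```java
import java.util.List;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

/** Local stand-in for the RestartServer RMI interface; only stopServer() is named in the text. */
interface RestartServer {
    void stopServer() throws Exception;
}

/** Hypothetical fault injector that periodically stops each registered replica. */
final class FaultInjector {
    private final List<RestartServer> replicas;
    private ScheduledExecutorService scheduler;

    FaultInjector(List<RestartServer> replicas) {
        this.replicas = replicas;
    }

    /** Injects one fault every intervalMillis, rotating over the registered replicas. */
    synchronized void scheduleShutdown(long intervalMillis) {
        if (intervalMillis <= 0) {
            throw new IllegalArgumentException("interval must be positive");
        }
        int n = replicas.size();
        if (n == 0) {
            return;
        }
        cancel(); // drop any previous schedule
        scheduler = Executors.newScheduledThreadPool(n); // one worker thread per replica
        for (int i = 0; i < n; i++) {
            RestartServer target = replicas.get(i);
            // Assumption: replica i starts i intervals late and repeats every n intervals,
            // so exactly one replica is hit per interval, in alternating order.
            scheduler.scheduleAtFixedRate(() -> {
                try {
                    target.stopServer();
                } catch (Exception e) {
                    System.err.println("stopServer() failed: " + e);
                }
            }, i * intervalMillis, n * intervalMillis, TimeUnit.MILLISECONDS);
        }
    }

    /** Stops injecting faults. */
    synchronized void cancel() {
        if (scheduler != null) {
            scheduler.shutdownNow();
            scheduler = null;
        }
    }

    public static void main(String[] args) throws InterruptedException {
        RestartServer primary = () -> System.out.println("fault injected on primary");
        RestartServer backup = () -> System.out.println("fault injected on backup");
        FaultInjector injector = new FaultInjector(List.of(primary, backup));
        injector.scheduleShutdown(200); // short interval for the demo; the tests used 180 seconds
        Thread.sleep(1000);
        injector.cancel();
    }
}
```

Catching the exception inside the task matters with scheduleAtFixedRate: an uncaught exception would suppress all later runs for that replica.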